Crawl Budget Optimization: Make Every Bot Visit Count

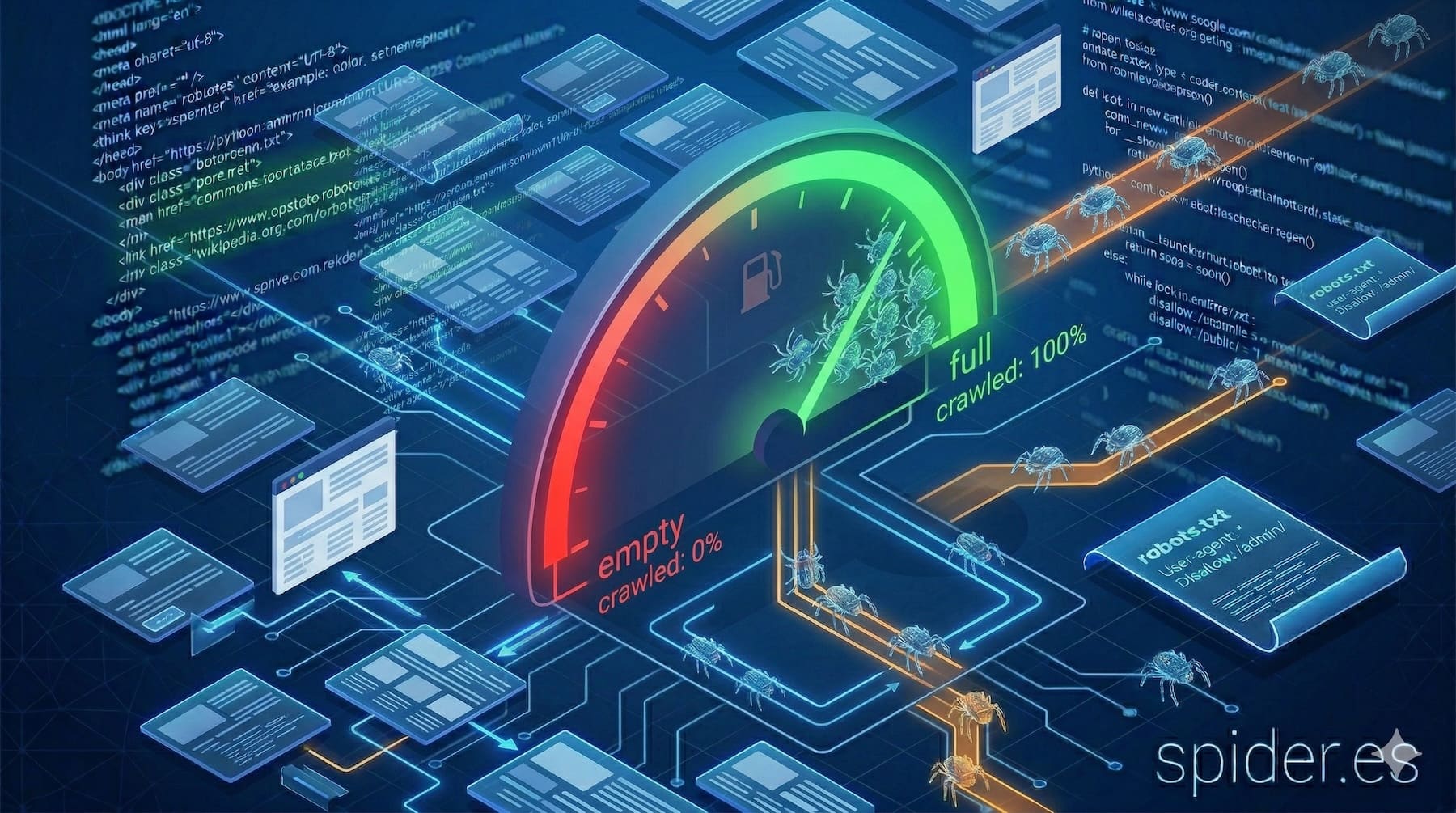

Search engine bots do not have unlimited time or resources. Every site receives a finite amount of crawling attention, and how that attention is spent determines which pages get discovered, indexed and kept fresh. This finite allocation is called crawl budget. For small sites with a few hundred pages, it rarely becomes a bottleneck. But for e-commerce stores, news publishers, SaaS platforms with user-generated content or any site that grows beyond a few thousand URLs, crawl budget optimization is a critical SEO discipline.

What crawl budget actually is

Google defines crawl budget as the intersection of two factors:

Crawl rate limit

This is the maximum number of simultaneous connections and requests Googlebot will make to your server without degrading the user experience. It is determined by your server's response speed and stability. If your server responds quickly with 200 status codes, the crawl rate goes up. If it starts returning 5xx errors or slowing down, Googlebot automatically backs off to avoid overloading your infrastructure. Google Search Console used to offer a manual crawl rate setting, but Google retired it in early 2024, and even while it existed it only set a ceiling: it never forced Googlebot to crawl more.

Crawl demand

This is how much Google wants to crawl your site, driven by two signals: popularity (pages with more inbound links and user engagement attract more crawling) and staleness (pages that change frequently are re-crawled more often to keep the index fresh). A news homepage that updates every ten minutes gets far more crawl demand than an unchanging "About Us" page.

Your effective crawl budget is the lower of these two. A fast server with low demand still gets modest crawling. A high-demand site on a slow server hits the rate limit before all important pages are reached. The goal of optimization is to ensure that within whatever budget you receive, bots spend their visits on the pages that drive business value.

What wastes crawl budget

Before optimizing, you need to identify the common drains. These are the issues that cause bots to burn visits on pages that add nothing to your search presence.

Parameter URLs and faceted navigation

An e-commerce site with filters for colour, size, price range, brand and sort order can generate millions of URL variations from a few thousand products. URLs like /shoes?color=red&size=10&sort=price and /shoes?size=10&color=red&sort=price may render identical content under different URLs. Each one consumes a crawl slot. If left unchecked, bots will spend the majority of their budget crawling duplicate filter combinations instead of actual product pages.
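One way to see how many of those variations collapse to the same page is to normalize the query string before comparing URLs. The sketch below is illustrative only: the `CONTENT_PARAMS` allow-list and the `canonicalize` helper are assumptions, and which parameters really change content depends on your own site.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical allow-list: parameters assumed to change the page content.
# Everything else (sort order, tracking tags, ...) is dropped.
CONTENT_PARAMS = {"color", "size"}

def canonicalize(url: str) -> str:
    """Drop non-content parameters and sort the rest into a stable order."""
    parts = urlsplit(url)
    params = [(k, v) for k, v in parse_qsl(parts.query) if k in CONTENT_PARAMS]
    query = urlencode(sorted(params))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))

a = canonicalize("/shoes?color=red&size=10&sort=price")
b = canonicalize("/shoes?size=10&color=red&sort=price")
print(a)       # /shoes?color=red&size=10
print(a == b)  # True
```

The same normalized form can feed a canonical tag or a 301 target, so every variant points at a single URL.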

Redirect chains

When URL A redirects to URL B, which redirects to URL C, which finally reaches URL D, the bot has consumed four crawl slots for a single destination. Chains often accumulate during site migrations, CMS changes or URL restructuring. Google follows up to about 10 redirects before giving up, but every hop is a wasted request.

Soft 404 pages

A soft 404 is a page that returns a 200 OK status but displays content that says "Page not found" or shows an empty product listing. Bots crawl these pages, download the full HTML and then have to figure out that the content is worthless. Real 404 or 410 status codes let the bot immediately move on. Soft 404s waste both bandwidth and processing time.
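A rough heuristic for spotting candidates during an audit might look like the following. The phrase list and the `min_length` threshold are assumptions to tune against your own templates, and the check can produce false positives on legitimately short pages.

```python
import re

# Assumed "empty page" phrases; adjust to match your own templates.
NOT_FOUND_PATTERNS = re.compile(
    r"page not found|no products found|0 results", re.IGNORECASE
)

def looks_like_soft_404(status: int, body: str, min_length: int = 200) -> bool:
    """Flag 200 responses whose visible text looks like an error or empty page."""
    if status != 200:
        return False  # real 404/410 responses are not soft 404s
    text = re.sub(r"<[^>]+>", " ", body)  # crude tag stripping
    text = " ".join(text.split())         # collapse whitespace
    return bool(NOT_FOUND_PATTERNS.search(text)) or len(text) < min_length

real_page = "<p>" + "Great running shoe with a cushioned sole. " * 20 + "</p>"
print(looks_like_soft_404(200, "<h1>Page not found</h1>"))  # True
print(looks_like_soft_404(404, "<h1>Page not found</h1>"))  # False
print(looks_like_soft_404(200, real_page))                  # False
```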

Duplicate content without canonicals

Session IDs in URLs, www vs non-www variants, HTTP vs HTTPS versions, trailing slashes, case variations—all of these create duplicate entry points to the same content. Without proper canonical tags and redirects, the bot crawls each variant separately, fragmenting your budget across identical pages.

Infinite spaces and calendar traps

Some CMS configurations create calendar widgets or pagination patterns that generate an effectively infinite number of URLs. A calendar that lets you click into the year 2099 creates thousands of empty pages. Similarly, a search function that exposes its results as crawlable URLs can generate limitless low-value pages from bot-triggered queries.

Large media files and unoptimized resources

When bots download unnecessarily large images, unminified JavaScript bundles or embedded videos on every page, the transfer time reduces how many pages they can reach within the crawl window. This is especially impactful when the heavy resources are not essential for understanding the page content.

How to optimize crawl budget

Build a clean URL architecture

Start with the foundation. Every indexable URL should serve unique, valuable content. Implement these structural controls:

- Canonical tags on every page, pointing to the preferred version of the content.

- Parameter handling: use robots.txt to block known junk parameter combinations, or implement server-side logic that returns a canonical tag or 301 redirect for parameter variations.

- Consistent URL format: pick one convention (lowercase, no trailing slash, HTTPS, with or without www) and enforce it with redirects.

- Pagination with purpose: use rel="next" and rel="prev" where supported, and ensure paginated pages are discoverable through internal links and sitemaps.

Fix redirect chains

Audit your redirects and flatten chains so that every redirect is a single hop from source to final destination. After a site migration, update internal links to point directly to the new URLs rather than relying on redirect chains. Monitor for new chains as content evolves—they tend to accumulate silently.
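Flattening can be sketched as a small function over a redirect map exported from your server or CMS. The map format (source path to target path) and the `flatten_redirects` name are assumptions for illustration; the hop cap of 10 mirrors the approximate limit mentioned earlier.

```python
def flatten_redirects(redirects: dict[str, str], max_hops: int = 10) -> dict[str, str]:
    """Rewrite every redirect so it points straight at its final destination."""
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        hops = 1
        # Keep following while the target is itself a redirect source.
        while target in redirects:
            if target in seen or hops >= max_hops:
                raise ValueError(f"redirect loop or overly long chain starting at {source}")
            seen.add(target)
            target = redirects[target]
            hops += 1
        flattened[source] = target
    return flattened

chain = {"/a": "/b", "/b": "/c", "/c": "/d"}
print(flatten_redirects(chain))  # {'/a': '/d', '/b': '/d', '/c': '/d'}
```

Raising on loops rather than silently skipping them makes it harder to deploy a broken rule set.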

Return proper HTTP status codes

Deleted pages should return 404 (not found) or 410 (gone). Moved pages should return 301 (permanent redirect). Temporarily unavailable pages should return 503 with a Retry-After header. Never serve a 200 status code for content that does not exist—this creates soft 404s that waste crawl budget and confuse the index.

Improve server response time

The faster your server responds, the more pages bots can crawl within the rate limit. Key levers include:

- Server-side caching: serve pre-rendered HTML for bot requests when possible.

- CDN deployment: reduce latency by serving responses from edge nodes close to the bot's location.

- Database optimization: slow queries that affect page generation time directly reduce crawl throughput.

- HTTP/2 support: multiplexed connections improve efficiency for both bots and users.

- Monitoring TTFB: keep Time To First Byte under 200ms for critical pages.

Curate your XML sitemaps

Sitemaps are a direct signal to bots about which URLs matter. Optimize them by:

- Including only indexable, canonical URLs—never list redirects, noindex pages or blocked URLs.

- Segmenting sitemaps by content type (products, articles, categories) to help bots prioritize.

- Setting accurate <lastmod> dates: only update when the content genuinely changes. False freshness signals erode trust.

- Keeping sitemaps under 50,000 URLs or 50MB per file, using a sitemap index for larger sites.

- Referencing sitemaps in your robots.txt file.
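As a rough illustration of the URL-count limit, the sketch below splits a list of canonical URLs into sitemap files. The `build_sitemaps` helper, the example.com URLs and the dates are made up for the example, and the code does not check the 50MB size limit or generate the sitemap index.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000

def build_sitemaps(entries, max_urls=MAX_URLS_PER_FILE):
    """entries: list of (loc, lastmod) tuples. Returns one XML string per file."""
    files = []
    for start in range(0, len(entries), max_urls):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for loc, lastmod in entries[start:start + max_urls]:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = loc
            # lastmod should reflect the last genuine content change.
            ET.SubElement(url, "lastmod").text = lastmod
        files.append(ET.tostring(urlset, encoding="unicode"))
    return files

# Hypothetical product URLs; a tiny max_urls makes the chunking visible.
entries = [(f"https://example.com/shoes/item-{i}", "2024-01-15") for i in range(5)]
files = build_sitemaps(entries, max_urls=2)
print(len(files))  # 3
```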

Strengthen internal linking

Pages that are well-connected through internal links get crawled more frequently and more quickly. Orphan pages—those with no internal links pointing to them—may never be discovered even if they appear in a sitemap. Use breadcrumb navigation, related content modules, footer links and contextual links within body content to create a dense, logical link graph.
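Orphan detection can be sketched as a breadth-first walk over the internal link graph, compared against the sitemap. The graph below is invented for illustration, and the check is slightly broader than the strict definition: it flags any sitemap URL that cannot be reached by following links from the start page.

```python
from collections import deque

def find_orphans(link_graph, start, sitemap_urls):
    """Return sitemap URLs that cannot be reached by following links from start."""
    reachable = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, ()):
            if target not in reachable:
                reachable.add(target)
                queue.append(target)
    return set(sitemap_urls) - reachable

# Hypothetical site: /old-landing links out, but nothing links to it.
link_graph = {
    "/": ["/shoes", "/about"],
    "/shoes": ["/shoes/red-runner"],
    "/old-landing": ["/"],
}
sitemap_urls = {"/", "/shoes", "/shoes/red-runner", "/old-landing"}
print(find_orphans(link_graph, "/", sitemap_urls))  # {'/old-landing'}
```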

Block crawling of low-value areas

Use robots.txt to disallow paths that add no search value: admin panels, login pages, internal search results, shopping cart pages and print-friendly duplicates. Be careful not to block CSS, JavaScript or images that bots need for rendering—this can hurt your rankings rather than help.
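Before deploying a rule set, it can help to test it against URLs that must stay crawlable. The sketch below uses Python's standard-library `urllib.robotparser`. The rules and paths are illustrative assumptions, and this parser does plain prefix matching, so wildcard rules may be evaluated differently than Googlebot evaluates them.

```python
from urllib.robotparser import RobotFileParser

# Example rules only; adapt the paths to your own site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /login
Disallow: /search
Disallow: /cart/
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def allowed(path: str, agent: str = "Googlebot") -> bool:
    """True if the given user agent may crawl the path under these rules."""
    return parser.can_fetch(agent, path)

for path in ["/admin/users", "/search?q=shoes", "/static/app.css", "/shoes/red-runner"]:
    print(path, allowed(path))
```

Checking rendering assets such as `/static/app.css` alongside the blocked paths guards against the over-blocking mistake described above.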

Monitoring with server logs

The most reliable way to understand how bots actually interact with your site is through server log analysis. Search Console provides useful data, but logs give you the complete picture.

What to extract from logs

- Bot identification: filter requests by User-Agent to isolate Googlebot, Bingbot, and other crawlers.

- Pages crawled per day: track total bot requests over time to spot trends and anomalies.

- Status code distribution: what percentage of bot requests return 200, 301, 404, 5xx?

- Response time per request: identify slow pages that act as bottlenecks.

- Most and least crawled sections: compare bot attention against business priority. If bots spend 60% of their visits on filter pages and 5% on product pages, you have a budget allocation problem.

- Crawl frequency by URL: how often are key pages revisited? Are new pages discovered promptly?
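A minimal sketch of that extraction, assuming logs in the common "combined" access log format: the sample lines are fabricated for illustration, and `summarize_bot_hits` is a hypothetical helper. Because the User-Agent header can be spoofed, production pipelines usually also verify the requesting IP, for example with the reverse DNS check Google documents.

```python
import re
from collections import Counter

# Matches the combined log format: ip, timestamp, request line, status, size,
# referrer, user agent.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def summarize_bot_hits(lines, bot_token="Googlebot"):
    """Count status classes and top-level sections for one bot's requests."""
    statuses, sections = Counter(), Counter()
    for line in lines:
        m = LOG_PATTERN.match(line)
        if not m or bot_token not in m["agent"]:
            continue
        statuses[m["status"][0] + "xx"] += 1
        path = m["path"].split("?", 1)[0]
        sections["/" + path.strip("/").split("/", 1)[0]] += 1
    return statuses, sections

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [  # made-up log lines
    f'66.249.66.1 - - [10/Mar/2024:06:25:13 +0000] "GET /shoes?color=red HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /shoes/red-runner HTTP/1.1" 200 8342 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2024:06:25:15 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [10/Mar/2024:06:25:16 +0000] "GET /shoes HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
statuses, sections = summarize_bot_hits(sample)
print(dict(statuses))  # {'2xx': 2, '4xx': 1}
print(dict(sections))  # {'/shoes': 2, '/old-page': 1}
```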

Setting up log-based monitoring

Forward your access logs to a log management platform (ELK stack, Datadog, Splunk or even a simple script that parses the access log). Build dashboards that highlight:

- Daily crawl volume by bot.

- Top 50 most crawled URLs.

- URLs returning errors to bots.

- Average and p95 response times for bot requests.

Review these dashboards weekly. Sudden drops in crawl volume can indicate server issues, accidental robots.txt changes or penalties. Spikes may signal a bot loop or a crawl trap.
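If a weekly review is too slow, the same check can be automated by comparing each day's bot request count with a trailing average. The thresholds below (half the baseline for a drop, double for a spike), the window size and the counts are arbitrary assumptions, not recommended values.

```python
from statistics import mean

def flag_anomalies(daily_counts, window=7, drop_ratio=0.5, spike_ratio=2.0):
    """daily_counts: chronological list of (day, bot_request_count) pairs."""
    alerts = []
    for i in range(window, len(daily_counts)):
        baseline = mean(count for _, count in daily_counts[i - window:i])
        day, count = daily_counts[i]
        if baseline == 0:
            continue  # no history to compare against
        if count < baseline * drop_ratio:
            alerts.append((day, "drop"))
        elif count > baseline * spike_ratio:
            alerts.append((day, "spike"))
    return alerts

# Made-up counts; a short window keeps the example small.
counts = [("d1", 1000), ("d2", 1000), ("d3", 1000),
          ("d4", 400), ("d5", 1000), ("d6", 3000)]
print(flag_anomalies(counts, window=3))  # [('d4', 'drop'), ('d6', 'spike')]
```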

Crawl budget and Spider.es

Spider.es simulates how search engine bots experience your site. It follows links, reads robots.txt, checks status codes, measures response times and maps your internal link graph—giving you the same view a crawler has. Use it to:

- Identify redirect chains and fix them before they accumulate.

- Find orphan pages that bots cannot reach through normal crawling.

- Detect soft 404s and misconfigured status codes.

- Verify that your sitemaps contain only clean, indexable URLs.

- Measure server response times under simulated bot load.

Final thoughts

Crawl budget is not a number you can look up in a dashboard—it is the emergent result of your server performance, content architecture and the signals you send to search engines. Every redirect chain, every parameter URL, every soft 404 is a visit wasted on something that adds no value. Fix the leaks, strengthen the signals and monitor the results through logs. When every bot visit counts, every page that gets crawled should deserve to be there.